DSS Benchmark

NOTE : In Swingbench 2.4 the following isn’t necessary. There is a wizard “shwizard” in the bin directory that will walk you through the entire SH schema creation. The following is only necessary for 2.3.

Swingbench 2.3 includes a new benchmark capable of generating a more I/O intensive workload. Its based on the “Sales History” schema that ships with Oracle 10g/11g. Based on how much data is generated this benchmark should generate a large amount of disk activity in comparison to the “Order Entry” and “Calling Circle” benchmarks. Currently little tuning has been done against this benchmark and the layout for the storage/partitioning is sub optimal, this of course can be be easily rectified by an experienced DBA. The schema also doesn't use any materialized views on the basis that this would reduce I/O by a significant amount, these are likely to included as an option at some stage in the future.

The installation is a pretty good test of a servers CPU and Memory in its own right.

Generating the data

To install and run the DSS benchmark you'll need both Datagenerator to create the data and Swingbench to generate the load. The installation guide for swingbench can be found here. The installation guide for datagenerator can be found here.

The “SH” schema can be installed manually or via the graphical front end or command line. However the simplest and consise mode is to use the scripts located in the $DATAGEN_HOME/bin/scripts/sh directory.

These scripts can be run directly from SQL*Plus as the “SYS” or “SYSTEM” user. Start the installation by running the following command

[dom@etcpro01 sh]$ sqlplus / as sysdba @sh_install

SQL*Plus: Release 11.1.0.6.0 - Production on Mon Sep 1 11:23:41 2008

Copyright (c) 1982, 2007, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.1.0.6.0 - 64bit Production

With the Partitioning, Real Application Clusters, Oracle Label Security, OLAP,

Data Mining, Oracle Database Vault and Real Application Testing options

Sales history username [SH] :

Sales history password [SH] :

Default tablespace [SH] :

Default index tablespace [SH_INDEX] :

Enter scale. 1 = 1GB, 100=100GB etc [1] : 100

Enter connectstring for database [//etcpro01/PLLR] :

Enter level of parallelism [4] : 24

Generation will now begin. Press return to continue or control C to exit

The script will prompt you for the schema and the password (the user will be created). Hitting return at any stage will accept the default. The default and default index tablespace are the locations into which the data and indexes should be placed. The scale indicates how big the utlimate data set will be. The default config file is sized for 1GB and so a scale of 100 will create 100GB of raw data. The connectstring is used by the datagenerator and should be of the form

//<hostname>/<service>

The final parameter is the level of parallelism to be used. This is typically two times the number of cores in the server.

After the last question is asked, hitting return will begin the schema creation.

The time taken to create the data will depend on the power of the CPU’s and the “scale” specified. The following table indicates shows timings for a 100 scale build. I’ll also include 1 and 10TB builds when I get time on hardware.

| Process |

10GB (hours:min:secs) |

100GB (hours:min:secs) |

|---|---|---|

| Tablespace Creation |

00:02:27

|

00:18:44

|

| Data Generation |

00:15:56

|

02:47:39

|

| Index Builds |

00:18:15

|

02:15:36

|

| Schema Analysis |

00:09:16

|

00:22:47

|

The timings were taken on an unspecified 4 CPU dual core server with a mid range SAN (approx 400MB/sec of I/O).

The sizings for the 10GB tablespaces are

|

SH

|

SH_INDEX

|

||||||||

|---|---|---|---|---|---|---|---|---|---|

|

|

I’d be interested in other users timings and configurations and would be happy to post them to a page purely as a reference points. Please send them to me via the comments page here

Running the benchmark

Swingbench now includes a new configuration and java classes to run a load against the “Sales History” schema, this is located in the “$SWINGBENCH_HOME/bin/sample directory”. To launch swingbench with the new configuration file type

$> ./swingbench -c sample/shconfig.xml

from the bin/winbin directory.

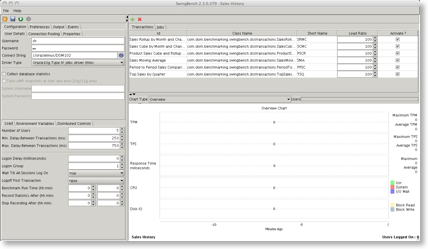

It can also be loaded after the initial launch of swingbench. This will launch the following dialogue

Check that the connect string matches your database and press play. Depending on the size of your database it may take a while for the transactions to start registering results.